Another point that is very important to understand is the time to recover the failure. And when this occurs, and when your failgroup returns, there are differences. The detail is that between the failures, the databases continued to run and change data in the disk. But the problem occurs if you have one failure (like CELLI03 diskgroup disappears) and after some time another failgroup fails (like CELLI07). If you have lucky and the failures occur at the same time, you can (most of the time) return the failed disks and try to mount the diskgroup because there is no difference between the failed disks/failgroups. Usually when this occurs, if you can’t recover the hardware error, you need to restore and recover a backup of your databases after recreating the diskgroup. In the diskgroup DATA above, after the second failure, your diskgroup will be dismounted instantly. Understanding the failure Unfortunately, failures (will) happen and can be multiple at the same time. In case of failure that just involves CELLI02 and CELLI03 failgroups, your data (from DBA19c) can be intact. And if your database is small (and your failgroups are big), and you are lucky too, the entire database can be stored in just these two places. So, some blocks from datafile 1 from DBA19, as an example, can be stored at CELLI01 and CELLI04.

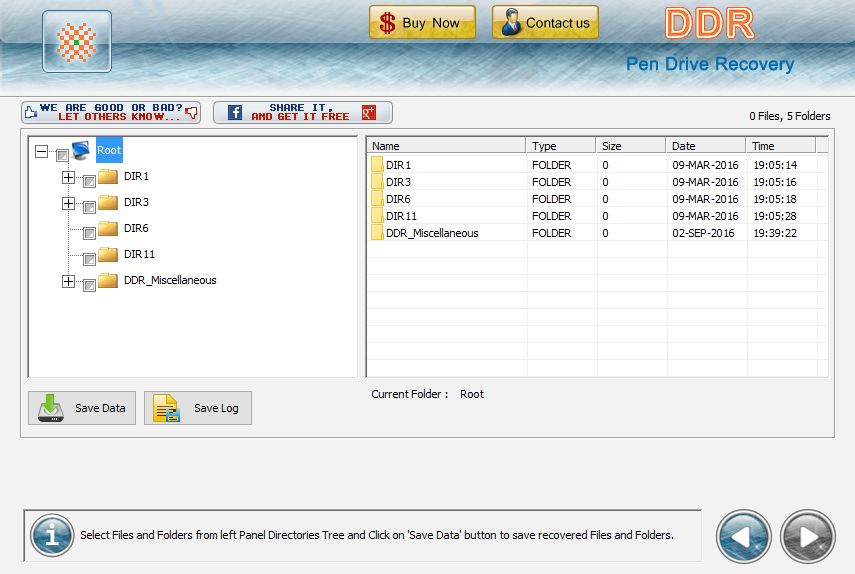

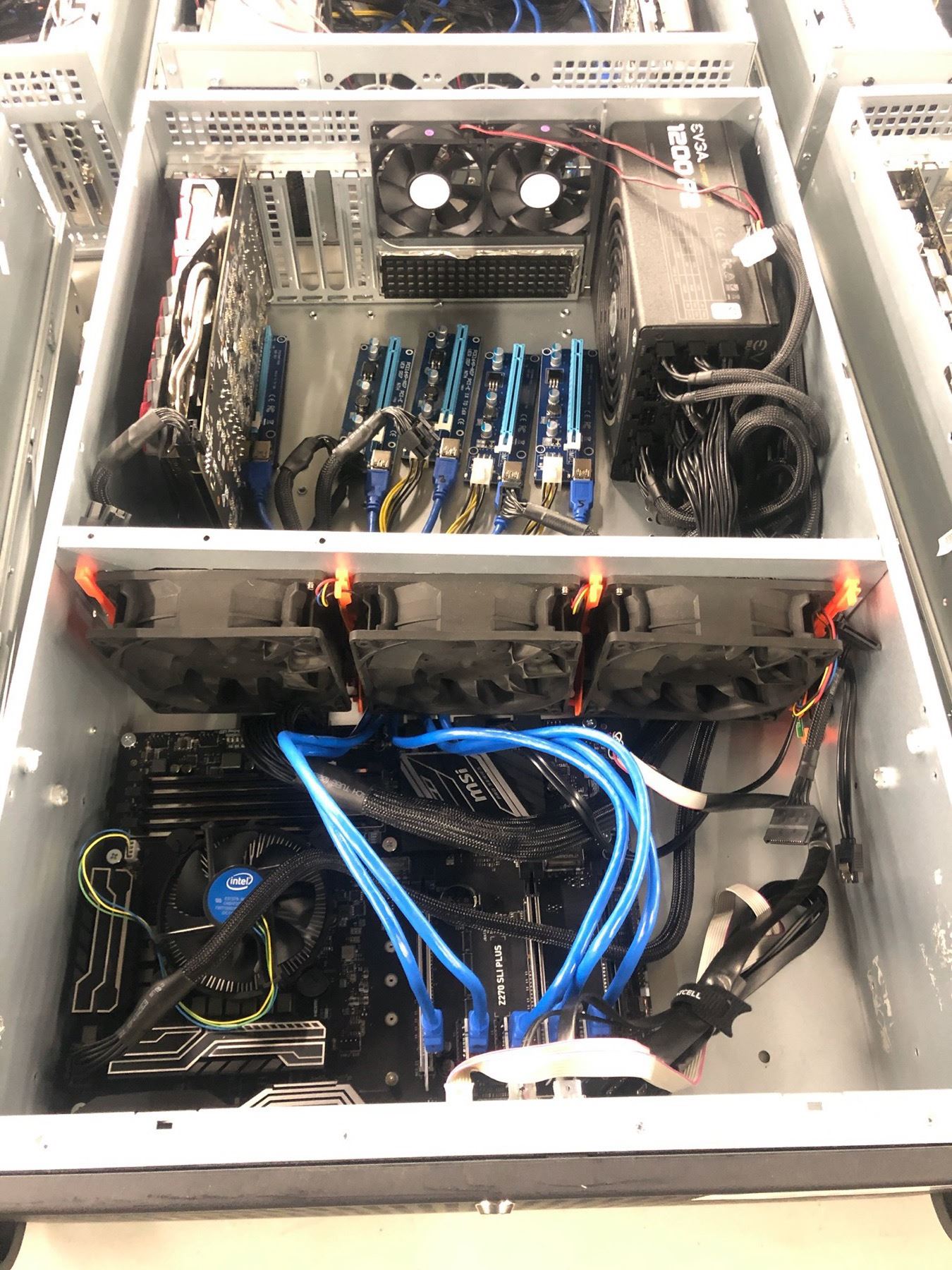

Remember that a NORMAL redundancy just needs two copies. So, with everything running correctly, the data from my databases will be spread two failgroups (this is just a representation and not correct representation where the blocks from my database are): And In this server, I have three databases running, DBA19, DBB19, and DBC19. The version for my GI is 19.6.0.0, but this can be used from 12.1.0.2 and newer versions (works for 11.2.0.4 in some versions). SYSTEMIDG01 SYSTEMIDG01 SYSI01 ORCL:SYSI01 SQL> select NAME,FAILGROUP,LABEL,PATH from v$asm_disk order by FAILGROUP, label If two failures occur, probably, I will have data loss/corruption. The redundancy for this diskgroup is NORMAL, this means that the block is copied in two failgroups. Basically your data will be in at least two different failgroups:Įnvironment In the example that I use here, I have one diskgroup called DATA, which has 7 (seven) disks and each one is on failgroup. This represents for Exadata, but it is safe for representation. At ASM, if you do not create failgroup, each disk is a different one in diskgroups that have redundancy enabled. The same idea can be applied at a “normal” environment, where you can create failgroup to disks attached to controller A, and another attached to controller B (so the failure of one storage controller does not affect all failgroups). As an example, this a key for Exadata, where every storage cell is one independent failgroup and you can survive to one entire cell failure (or double full, depending on the redundancy of your diskgroup) without data loss. And can even be better if you group disks in the same failgroups, so, your data will have multiple copies in separate places. The idea is simple, spread multiple copies in different disks. If you want to understand more about redundancy you have a lot of articles at MOS and on the internet that provide useful information. 
I will not extend about ASM disk redundancy here, but usually, you can configure your diskgroup without redundancy (EXTERNAL), double redundancy (NORMAL), triple redundancy (HIGH), and even fourth redundancy (EXTEND for stretch clusters). Diskgroup redundancy is a key factor for ASM resilience, where you can survive to disk failures and still continue to run databases. MOUNT DDR RECOVERY HOW TOCheck in this post how to use the undocumented feature “ mount restricted force for recovery” to resurrect diskgroup and lose less data when multiple failures occur. Survive to disk failures it is crucial to avoid data corruption, but sometimes, even with redundancy at ASM, multiple failures can happen.
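To make the failgroup reasoning concrete, here is a minimal Python sketch of NORMAL redundancy over seven failgroups named like the ones in this post (CELLI01 to CELLI07). It is only a toy model under loud assumptions: the helper names `place_extents` and `lost_extents` are hypothetical, and the placement is random, while real ASM mirrors extents to partner disks. What it shows is the core rule: a piece of data is lost only when every failgroup holding one of its copies has failed.

```python
import random

# Failgroup names as used in this post: CELLI01 .. CELLI07.
FAILGROUPS = [f"CELLI{n:02d}" for n in range(1, 8)]


def place_extents(num_extents, failgroups, copies=2, seed=0):
    """Assign each extent to `copies` distinct failgroups (NORMAL = 2).

    Assumption: random placement. Real ASM uses disk partnering instead.
    """
    rng = random.Random(seed)
    return [frozenset(rng.sample(failgroups, copies)) for _ in range(num_extents)]


def lost_extents(placement, failed):
    """Return the indexes of extents whose every copy is in a failed failgroup."""
    failed = set(failed)
    return [i for i, groups in enumerate(placement) if groups <= failed]


placement = place_extents(1000, FAILGROUPS)

# One failgroup down: every extent still has a surviving copy.
print("lost after CELLI03 fails:", len(lost_extents(placement, ["CELLI03"])))

# Two failgroups down: extents mirrored exactly on that pair are gone.
print("lost after CELLI03 and CELLI07 fail:",
      len(lost_extents(placement, ["CELLI03", "CELLI07"])))
```

An extent stored on CELLI01 and CELLI04 survives the loss of CELLI02 and CELLI03, which is why a small database can come out intact after a double failure if its copies happen to sit elsewhere.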
